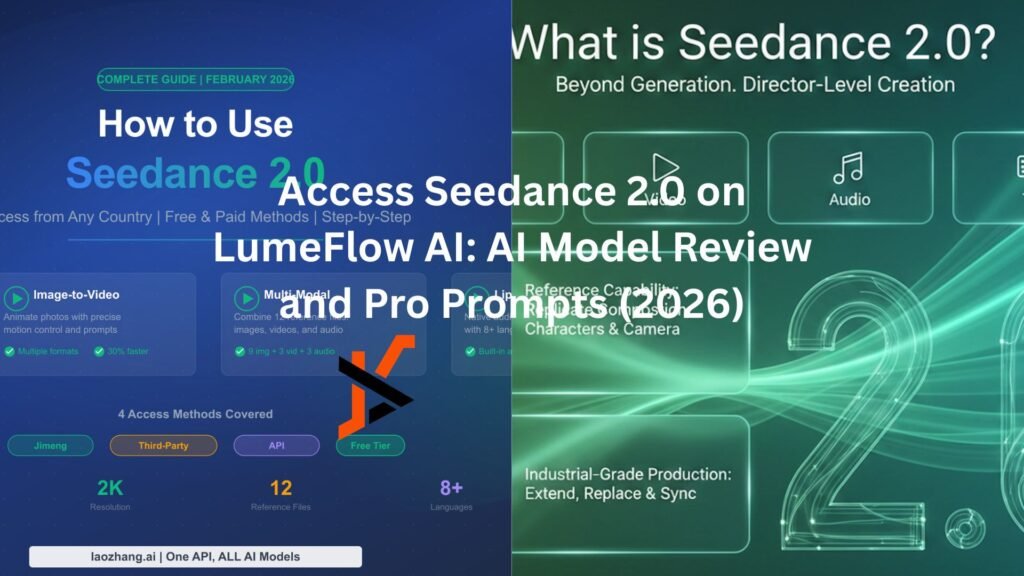

AI video tools have come a long way since the novelty demo days. Seedance 2.0, ByteDance’s latest AI video model released in February 2026, stands out from the crowd. Its quad-modal input system handles more than just text prompts. Native audio generation removes the sync headache. Motion imitation and director-level camera controls give creators actual say over the output.

The access situation makes this more interesting: the model generated buzz before most people could actually use it. LumeFlow AI offers access to an authentic version of Seedance 2.0, and that fact alone changes how this story should be told.

How Seedance 2.0 Compares to Sora 2 and Kling 3.0

Most discussions about AI models focus on benchmarks and capabilities. Authorization rarely comes up. With Seedance 2.0, it cannot be skipped.

ByteDance keeps distribution tight. Seedance 2.0 is currently available only in the China region. International users need a platform with authentic Seedance access. Gray-market options exist but come with obvious risks.

LumeFlow AI operates as a platform with access to Seedance 2.0, not as a workaround.

What does this mean in reality?

- The connections stay up. No waking up to find the service dead or API keys revoked.

- Feature completeness is guaranteed. Some third-party platforms cut corners, running limited versions of the model without advertising it. Platforms using the authentic version deliver the full package.

- Generation times average 3-5 minutes through LumeFlow AI. The industry standard runs from 10 minutes to several hours for comparable quality.

- Output arrives without watermarks. Videos are ready for client delivery.

Seedance 2.0 vs Sora 2, Kling 3.0, and Veo 3.1: Core Features

Quad-Modal Input: Beyond Text-Only AI Video

Most AI video tools process text prompts and nothing else. Seedance 2.0 accepts four input types simultaneously: text, images, video clips, and audio files.

Maximum Supported References:

● Nine images

● Three video clips (up to 15 seconds combined)

● Three audio tracks (up to 15 seconds combined)

● One text prompt

This setup lets users show the model what they want rather than describe it exhaustively in writing.
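The reference limits listed above are easy to check before submitting a job. The following is a minimal sketch in Python, assuming only the limits stated in this article. The `ReferenceBundle` class and `check_limits` function are hypothetical names invented for illustration and are not part of any Seedance or LumeFlow AI API.

```python
from dataclasses import dataclass, field
from typing import List

# Limits as stated in this article; the constant names are hypothetical.
MAX_IMAGES = 9
MAX_VIDEO_CLIPS = 3
MAX_AUDIO_TRACKS = 3
MAX_VIDEO_SECONDS = 15.0  # combined length of all video clips
MAX_AUDIO_SECONDS = 15.0  # combined length of all audio tracks


@dataclass
class ReferenceBundle:
    """One generation request: a single text prompt plus optional references."""
    prompt: str
    images: List[str] = field(default_factory=list)           # image file names
    video_seconds: List[float] = field(default_factory=list)  # one duration per clip
    audio_seconds: List[float] = field(default_factory=list)  # one duration per track


def check_limits(bundle: ReferenceBundle) -> List[str]:
    """Return a list of human-readable problems; an empty list means within limits."""
    problems = []
    if len(bundle.images) > MAX_IMAGES:
        problems.append(f"{len(bundle.images)} images exceeds the limit of {MAX_IMAGES}")
    if len(bundle.video_seconds) > MAX_VIDEO_CLIPS:
        problems.append(f"{len(bundle.video_seconds)} video clips exceeds the limit of {MAX_VIDEO_CLIPS}")
    if sum(bundle.video_seconds) > MAX_VIDEO_SECONDS:
        problems.append(f"combined video length {sum(bundle.video_seconds)}s exceeds {MAX_VIDEO_SECONDS}s")
    if len(bundle.audio_seconds) > MAX_AUDIO_TRACKS:
        problems.append(f"{len(bundle.audio_seconds)} audio tracks exceeds the limit of {MAX_AUDIO_TRACKS}")
    if sum(bundle.audio_seconds) > MAX_AUDIO_SECONDS:
        problems.append(f"combined audio length {sum(bundle.audio_seconds)}s exceeds {MAX_AUDIO_SECONDS}s")
    return problems


if __name__ == "__main__":
    bundle = ReferenceBundle(
        prompt="A dancer crosses a rooftop at dusk",
        images=["face.png", "rooftop.png"],
        video_seconds=[6.0, 4.5],
        audio_seconds=[12.0],
    )
    print(check_limits(bundle) or "within limits")
```

A pre-flight check like this catches an oversized bundle locally, before any generation time is spent on it.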

Image references work as visual anchors. Drop in a face, a style example, a location, or a product shot. The model incorporates these directly into the output.

Video references handle motion. Upload footage of a specific walking pattern, gesture, or dance move. Seedance 2.0 extracts the movement and applies it elsewhere.

Drop in an audio track, and the visuals keep pace. Camera movements and scene transitions fall in line with the musical beat.

Text prompts still matter. They set scene context, emotional tone, and handle nuances that visual references cannot cover.

Results improve significantly when you can demonstrate instead of describe.

Native Audio Generation in AI Video: Beats Kling 3.0 and Veo 3.1

This feature surprises people who have not used Seedance 2.0. The model generates audio alongside video—music, sound effects, ambient noise—all synchronized to the visual content.

No add-ons or post-processing required. Audio generation happens during the core generation process.

Lip sync performs reliably. Character mouth movements align with generated speech coherently.

Audio layering sounds natural. Background music does not overpower dialogue. Sound effects trigger with visual events. The model grasps audio hierarchy.

One pass produces video and audio ready for use.

Motion Imitation in AI Video: Smoother Than Sora 2

Movement in AI videos often looks wrong. Characters float. Physics breaks down. Everything feels weightless.

Motion Imitation fixes this by learning from real footage. Upload a video of any movement—a person walking, hands gesturing, a dance sequence—and the model extracts the underlying motion characteristics.

Walking patterns transfer with real pace and weight. Hand gestures feel grounded. Full-body choreography replicates accurately. Camera movements acquire the micro-adjustments that make footage feel like it was shot by a human operator.

Depth of field shifts naturally during motion. Focus changes happen as they would with a skilled camera operator, and this detail separates professional output from obvious AI junk.

Character consistency holds throughout clips. Faces, posture, and proportions remain stable. Single-character content shows no frame-to-frame inconsistency.

Camera Control in AI Video: Director-Level Precision

Seedance 2.0 gives control over elements that typically require editing software.

Lighting responds to references. Upload an image with specific lighting, and the model applies similar conditions. Shadows and highlights behave correctly.

Framing follows direction. Tell the model what you want in words or show it through a reference image. Either way, wide shots, close-ups, and rule-of-thirds compositions come out as described.

Dollies, pans, tilts, tracking shots—Seedance 2.0 knows what each one means. The model does not guess. It executes the movement as specified.

Visual style transfers through image references. Film noir, commercial brightness, dramatic mood—all achievable with the right reference images.

Prompt adherence is strong. Camera instructions in text prompts execute faithfully. Most AI video tools ignore this kind of direction.

Pro Seedance 2.0 Prompts: Cinematic, Cyberpunk, Anime and More

These AI video prompts have been tested with Seedance 2.0 through LumeFlow AI.

● Cinematic Hollywood Transformation

Prompt:

“An underwater scene transitions to alien transformation. A woman begins dissolving into luminous particles. Her body mutates into an otherworldly insect-creature hybrid with translucent wings and bioluminescent details. Macro cinematography captures the transformation: antennae emerge, exoskeleton forms with iridescent sheen, insectoid eyes develop. The creature takes flight through bioluminescent waters. Style: sci-fi, alien, bioluminescent, cinematic, otherworldly.”

For movie-style openings, brand films, and ad-quality visuals.

● Cyberpunk/Sci-Fi

Prompt:

“A woman stands on a cyberpunk rooftop at night. Holographic billboards flicker, flying vehicles cross the neon sky, wet surfaces reflect colorful lights. Inspired by Blade Runner aesthetic: teal-orange color grading, volumetric fog, cyan-magenta neon. Camera slowly tilts up as she gazes at the sprawling metropolis. Mood: dystopian, immersive, cinematic.”

For tech content, game promotion, futuristic themes.

● Anime Style

Prompt:

“A Japanese anime romance scene. A character stands in a school courtyard filled with cherry blossoms. Pink petals drift through the air as she gazes up at the blooming tree, smiling softly. Warm sunlight filters through the branches, creating a dreamy golden atmosphere. The moment captures a perfect spring day. Style: anime, romantic, warm golden tones, soft lighting, cinematic slow motion.”

For anime content, animation ideas, fan content.

● TikTok Viral Content

Prompt:

“A satisfying video of ice cream being scooped and placed in a cone. Top-down view, slow motion. Bright, vibrant colors, clean white background. Transitions: soft dissolve. Mood: ASMR-like, satisfying, shareable.”

For short videos, product showcases, viral content.

● Commercial Advertising

Prompt:

“A luxury watch close-up rotating on a velvet display. Studio rim lighting catches the metal edges. Inspired by Rolex/Omega commercials: elegant, minimal, high-contrast. The camera slowly pushes in. No watermark visible. Mood: premium, aspirational.”

For e-commerce, brand ads, product presentations.

Pro Tip: Upload multiple reference images using @mentions for Seedance 2.0’s multi-image input. Then add timestamp instructions like “0-5s: character @image1 walks in @image2 garden, 5-10s: camera pans to @image3 sunset, 10-15s: character looks at camera and smiles.” This gives you frame-perfect control over complex scenes.
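For longer shot lists, the timestamped prompt in the tip above can be assembled from a list of segments instead of typed by hand. This is a minimal sketch that only builds the prompt string in the format shown in the tip. The `build_timed_prompt` function is a hypothetical helper, and the checks it performs (back-to-back segments, no @image mention beyond the number of uploaded images) are assumptions made for illustration, not documented platform rules.

```python
import re
from typing import List, Tuple

Segment = Tuple[int, int, str]  # (start second, end second, description)


def build_timed_prompt(segments: List[Segment], image_count: int) -> str:
    """Join segments into a prompt like '0-5s: ..., 5-10s: ...'.

    Raises ValueError if a segment is empty or reversed, if segments do not
    run back to back, or if a description mentions an @imageN that was not
    uploaded (N must be between 1 and image_count).
    """
    parts = []
    previous_end = None
    for start, end, text in segments:
        if end <= start:
            raise ValueError(f"segment {start}-{end}s has no positive duration")
        if previous_end is not None and start != previous_end:
            raise ValueError(f"segment {start}-{end}s does not start at {previous_end}s")
        for number in re.findall(r"@image(\d+)", text):
            if not 1 <= int(number) <= image_count:
                raise ValueError(f"@image{number} is not among the {image_count} uploaded images")
        parts.append(f"{start}-{end}s: {text}")
        previous_end = end
    return ", ".join(parts) + "."


if __name__ == "__main__":
    shots = [
        (0, 5, "character @image1 walks in @image2 garden"),
        (5, 10, "camera pans to @image3 sunset"),
        (10, 15, "character looks at camera and smiles"),
    ]
    print(build_timed_prompt(shots, image_count=3))
```

Keeping the shot list as data makes it easy to reorder or retime segments without breaking the mentions or leaving gaps in the timeline.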

Final Thoughts on Seedance 2.0 AI Video

Seedance 2.0 through LumeFlow AI combines legitimate capabilities with reliable access. The quad-modal system handles more than text-only alternatives. Native audio removes sync work. Motion imitation and camera controls give real creative direction.

Access to Seedance 2.0 through LumeFlow AI addresses the reliability concerns that come with gray-market access. Stable connections, complete features, and actual support matter for production work.

Content creators, marketers, and video producers who work seriously with AI tools should evaluate this combination.